Feb 5, 2026

Skai builds trustworthy agent workflows with LangWatch

Overview

Skai is a platform for marketers running retail media across many channels. Customers can manage campaigns inside each publisher’s native UI, but the real value Skai provides is a unified view: how performance compares across channels and where to shift spend, attention, and strategy. To make that unified view usable at scale, Skai built Celeste, an AI agent designed to analyze cross-channel performance and turn it into actionable, human-readable guidance. Celeste helps customers move faster than a manual process of jumping between dashboards, assembling reports, and summarizing outcomes. As Celeste expanded, Skai faced a common agent problem: correctness and trust don’t fail in obvious ways. They fail in the edge cases, publisher-specific nuances, multi-step tool flows, and multi-turn conversations. Skai needed a systematic way to validate quality during development and to observe real usage in production, without turning every release into a manual regression sprint.

Skai partnered with LangWatch to operationalize agent testing end-to-end: scenario simulations for multi-turn flows, targeted evaluations tied to real failure modes, and production observability that informs both engineering and product strategy.

“Agents are state machines. If you only evaluate a single prompt and a single answer, you’re not testing the real system.” Lior Heber, Lead Architect (GenAI), Skai

The challenge: shipping an agent across many domains without regressions

Skai’s domain is complex. Each publisher ecosystem has its own terminology, metrics, and rules. A question about Google performance isn’t answered the same way as a question about TikTok, Snapchat, or Amazon. Celeste needed to respond accurately within context, and stay accurate as new features, tools, and instructions were added. Skai quickly saw two risks:

Regression risk during iteration Celeste was evolving rapidly. Multiple teams and domain stakeholders would contribute: new capabilities, new tool calls, new instructions, new prompt updates. Without a testing layer, every change increased the likelihood of regressions.

Visibility risk in production Once customers started using Celeste, Skai needed to understand what was really happening.

Which questions were common? Which paths failed? Where did users get stuck? What “surprising” uses were emerging?

Relying on occasional feedback or manually reading conversations doesn’t scale and it biases the roadmap toward anecdotes.

“When multiple people touch the agent, you need to balance enabling improvements with making sure you’re not causing regressions. And you need observability, because customers will use the agent in ways that surprise you.” — Lior Heber, Skai

Before LangWatch, regression testing required a costly stop-the-world routine: engineers manually talking to the agent, trying to determine whether behavior changed and whether the changes were correct.“Every feature meant stopping everyone’s work to do manual regression. It’s expensive, and developers don’t always have the business context to judge correctness.” — Lior Heber, Skai

Building with LangWatch: testing agents like agents behave

Skai evaluated the market and found that much of it centered on a single paradigm: ask one question, score one answer. That didn’t match how Celeste worked in practice. Celeste isn’t a single prompt template. It’s an agent workflow: tool calls, multi-step reasoning, and multi-turn conversations. The testing system had to simulate real usage: follow-ups, changing context, and dynamic behavior across steps. Skai started with LangWatch Scenarios because it reflected the core need: automate the same multi-turn “conversation testing” the team had been doing manually.

“Most tools focus on one prompt and one response. But that’s not how customers use an agent. We needed to test the conversation end-to-end.” — Lior Heber, Skai

Evaluations: from scenarios in development to evaluations in production

Skai adopted LangWatch in two complementary layers:

1) Development quality gates with Scenarios.

During development, Skai builds scenario suites from two sources:

• Internal planned use cases: business workflows the team knows Celeste should support.

• Production-driven cases: customer questions that Celeste struggled with, which become must-fix scenarios.

Scenarios let Skai replay realistic multi-turn conversations using the current code and prompts, validating the whole workflow, not just a response string. They also became a durable quality gate, especially for high-stakes customers and “critical questions” that must not regress.

“For special clients and critical questions, we want those scenarios to always pass. They become our gate. We never regress for them.” — Lior Heber, Skai

2) Production observability and targeted evaluations Once features ship, Skai uses LangWatch tracing and evaluations to understand:

• overall agent health,

• recurring failure modes,

• and where to invest engineering effort next.

They began with high-level KPIs (for example, whether responses are complete and on-domain), then added targeted evaluators for specific issues as they surfaced.

“Generic evaluations are good for the overall health of the system. But then we create evaluators for the problems we notice, so once they’re solved, they don’t deviate over time.” — Lior Heber, Skai

A concrete example: fixing date interpretation with an evaluator-driven loop In production, Skai noticed a recurring issue: Celeste sometimes got date ranges wrong when analyzing performance. This kind of error is easy to miss with generic scoring. A response can sound confident and still be wrong. Skai built a dedicated evaluator that:

• detects the date intent in the user’s question,

• checks whether the agent selected the correct date interpretation among valid options,

• flags failures and “inconclusive” cases for review.

That evaluator gave Skai a measurable signal: how often it happened and under what conditions. With that data, the team implemented a focused fix: a date-resolution tool step in the workflow.

The result: a major reduction in the issue in production.

“We wrote a simple evaluator, measured how often it happens, built a small tool to resolve dates, and it reduced the issue by around 90%. The evaluator keeps running, so it doesn’t regress later.” — Lior Heber, Skai

Democratizing quality: domain experts contribute tests without writing code

Because Celeste spans many publisher domains, correctness depends on experts across the business. Skai’s process brings domain owners into the loop:

• Domain experts define questions, expected behaviors, and requirements. • Those requirements become scenario criteria and evaluation checks in LangWatch. • Engineering runs the suite as part of the development and release process.

Skai is progressively migrating their testing artifacts from documents and spreadsheets into LangWatch so they can be executed and tracked consistently.

Skai’s direction is to make this even more self-serve: domain owners run tests end-to-end to validate changes early, while engineering remains responsible for shipping.

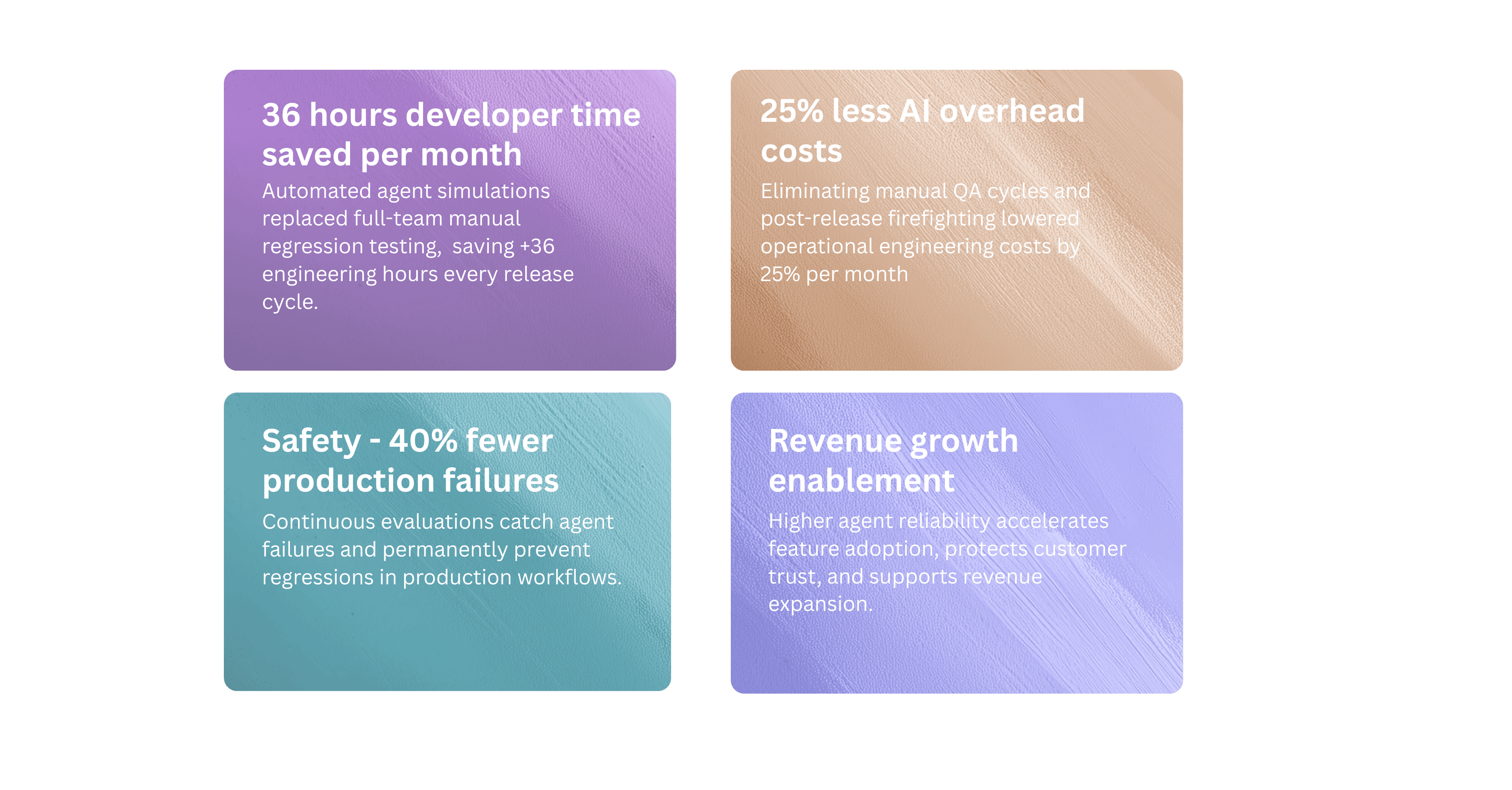

Outcomes: faster iteration, higher confidence, better roadmap signals

Skai’s results are both operational and strategic:

Engineering outcomes

• Removed manual regression cycles that previously blocked teams.

• Automated release confidence with scenario gates and ongoing evaluators.

• More reliable multi-turn behavior testing aligned with how customers actually use Celeste.

“We used to have six or seven developers stop for a whole day just to manually test conversations. Now the whole thing is automated.” — Lior Heber, Skai

Product outcomes

• Objective visibility into real usage: what users ask, where they struggle, and which capabilities matter most.

• Roadmap decisions driven by data, not anecdotes.

“Instead of a product manager stumbling on a conversation by accident, we can see what’s actually happening at scale and build a more informed roadmap.” — Lior Heber, Skai

Trust outcomes

• More consistent quality signals before and after release.

• Reduced risk of confidence loss from obvious regressions.

“The main metric for clients is trust. LangWatch helps us feel comfortable shipping because we have scenarios and evaluations that tell us quality hasn’t degraded.” — Lior Heber, Skai

Skai’s advice to teams building agents

Skai’s view is pragmatic: ship fast, but don’t confuse speed with lack of instrumentation.

“Push an MVP, but once customers use your agent you must observe what’s happening objectively. If you lose user trust early, it’s very hard to win it back.” — Lior Heber, Skai

They also emphasize partnering with tools, and teams, that acknowledge the space is still evolving

.“This is still an evolving world. You want partners who learn with you, not platforms that claim the standards are settled and force your system into their paradigm.” — Lior Heber, Skai

Looking Ahead

Skai continues to expand Celeste’s capabilities, including personalization and memory-like behavior. LangWatch evaluations are becoming a research tool as well as a safety mechanism, detecting user intent (for storing and retrieving preferences) and helping Skai design features around real observed behavior rather than assumptions.

Ready to ge the same results? Book a demo.