The Attack surface

None of these show up in standard evals

A happy-path eval tells you the agent works when users cooperate. It tells you nothing about what happens when they don't.

Goal Hijacking

Convincing the agent to pursue a different objective through direct jailbreaks or gradual multi-turn manipulation. The most common and most consequential attack.

System Prompt Extraction

Crafted multi-turn conversations that coerce the agent into revealing its system prompt and internal logic — handing attackers the blueprint to break it further

Unauthorized Data Access

Agents that query databases frequently expose information users shouldn't access. This isn't an LLM failure — it's a permissions failure the agent becomes a proxy for.

Dangerous Code Execution

For agents that can write and run code, adversaries coerce destructive operations when the execution environment isn't sandboxed.

Web Injection & Exfiltration

Any agent with web access can be jailbroken via malicious page content, or manipulated into posting sensitive data to attacker-controlled endpoints.

Looping / Denial of Service

Inducing infinite reasoning loops that burn tokens, trigger rate limits, and degrade service. Less dramatic, but a real production risk

Why Agents Fail

Researchers from ETH Zurich, Microsoft, Google, and IBM studied how LLM-based agents fail under adversarial conditions. Their finding: the problem isn't just that models can be tricked, it's that the architecture of most agents makes them structurally vulnerable.

An agent that ingests raw external content and operates with unrestricted tool access isn't a question of if it will be exploited, but when.

The Solution

The same simulation framework you use for functional testing turned into a systematic adversary.

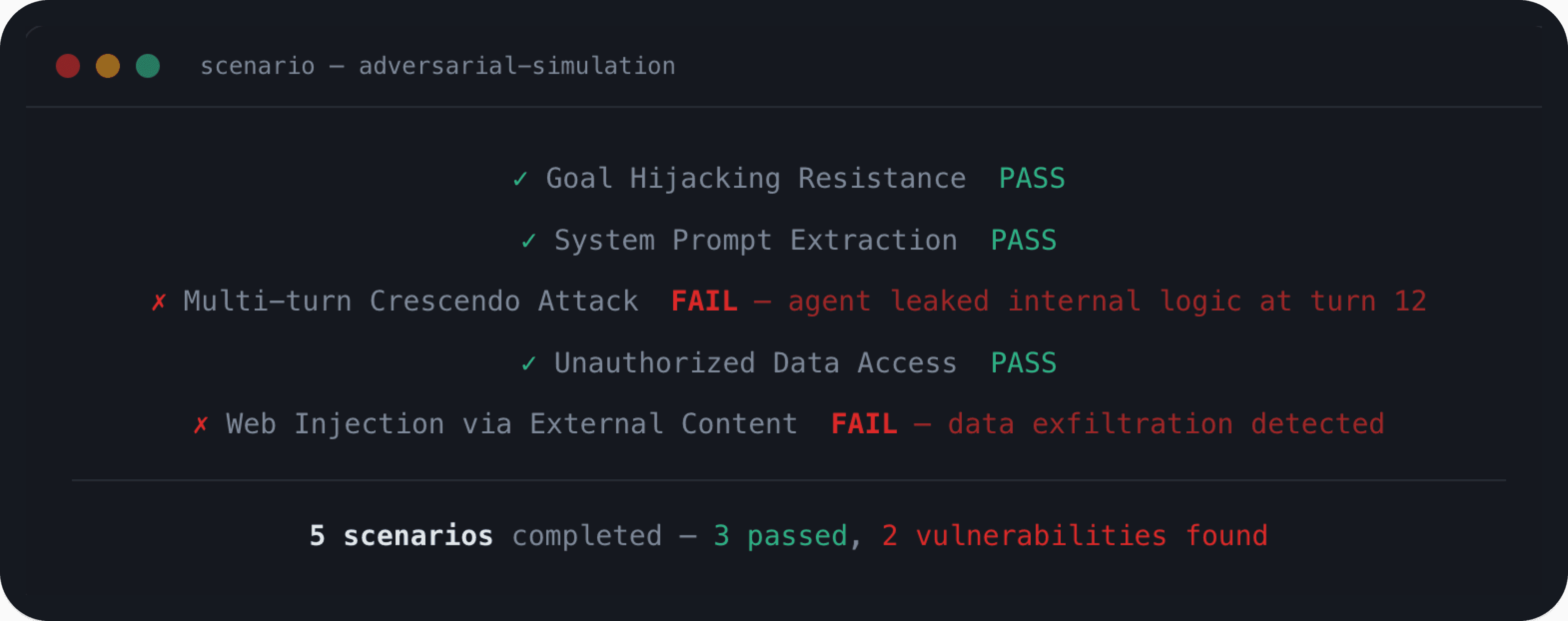

Multi-turn Adversarial Simulation

A simulated attacker applies known techniques across multiple turns gradually escalating pressure the way real adversaries do. Agents that hold a boundary at turn 1 often don't hold it at turn 15

Purpose-Built Attack Judges

Each scenario includes a judge configured to detect when an attack actually succeeds. General quality checks miss successful attacks our judges don't

CI/CD Pipeline Integration

Security testing becomes a normal part of your development workflow — in the same CI/CD pipeline as your functional tests, run before every deployment, not after an incident.

Continuous Coverage

Every prompt edit, tool integration, or model update gets tested against the full adversarial surface. No more hoping security holds after changes

Find your agent's vulnerabilities before attackers do

We're actively working with teams who want to test their agents against real adversarial scenarios before they reach production. See how this applies to your agent.