How we test Agent Skills with Scenario simulations

Sergio Cardenas

Most AI agent skills ship untested. A prompt file gets written, someone eyeballs the output a few times, and it goes to production. When it breaks, you find out from users.

At LangWatch, we use our own Scenario feature to simulate full conversations against real agent runtimes, with LLM judges evaluating the results. This is how we test every skill before it ships — and what we've learned about why simulation-based testing fills a gap that other approaches can't.

Before diving into that, here's what the industry does today.

The Current state of Skill Testing

Level 1: Static Validation (Free, fast, limited)

The simplest approach is treating skill definitions as structured documents and validating them like config files:

Frontmatter checks — Does the SKILL.md have required fields (

name,description,user-prompt)?Reference integrity — Do all

_shared/references resolve to real files?Compiled output freshness — Does the compiled prompt match the current source?

Tool name validation — Are MCP tool references real, or typos?

This runs in under 5 seconds, costs nothing, and catches a surprising number of issues — broken references, stale compiled prompts, invalid frontmatter. It's the kind of thing that should run on every commit.

What it can't do: Tell you whether the skill actually works. A perfectly valid SKILL.md can still produce garbage when an agent interprets it. Static validation is necessary but nowhere near sufficient.

Level 2: CLI Adapter Testing

The next step up is spawning a real code assistant (like claude -p) as a subprocess, feeding it a skill and a prompt, and checking the output. This is closer to reality — you're testing what the agent actually does with the skill.

A typical setup:

Spawn the assistant in a temp directory with a fixture codebase

Pipe in a user prompt like "instrument my code with langwatch"

Parse the NDJSON stream for tool calls, file edits, and final output

Assert on results — did it create the right files? Did it use the right SDK?

This is genuinely useful. You're testing the skill in context, against a real agent, with real file operations. Some teams add LLM-as-judge scoring on top — rating output quality on dimensions like clarity, completeness, and actionability.

What's still missing: The user. Real skill usage isn't a single prompt-response pair. Users ask follow-up questions, provide clarification, change their mind mid-conversation. A one-shot test can't validate multi-turn behavior. And without a simulated user, you can't test whether the skill handles ambiguity, asks the right clarifying questions, or recovers from misunderstandings.

Level 3: Full conversation simulation with LangWatch Scenarios

LangWatch's Scenario SDK introduces a three-agent triangle: the agent under test, a simulated user, and a judge. Together, they simulate a full conversation — not a single prompt, but an actual multi-turn interaction with realistic user behavior.

We use this to test every LangWatch skill. Here's what the approach looks like in practice and where it helps.

The Three-Agent Architecture

Agent Under Test — Not a mock. Real Claude Code spawned as a subprocess, with the SKILL.md loaded, MCP servers configured, and a fixture codebase in its working directory.

User Simulator — An LLM-powered agent that plays the role of a user. It follows a script but can improvise, ask clarifying questions, and respond to the agent naturally.

Judge Agent — Evaluates the entire conversation against semantic criteria. Did the agent complete the task? Did it follow best practices? Did it explain what it did?

What a real test looks like

This single test validates:

The agent understands the skill instructions

It modifies the correct files

The modifications are functionally correct (deterministic checks)

The overall conversation quality meets semantic criteria (judge evaluation)

Hybrid evaluation: Why both matter

Pure LLM judging can miss concrete failures. Pure deterministic checking is brittle — it can't evaluate quality. We use both.

Deterministic checks catch hard failures:

Semantic evaluation catches quality issues:

The anti-hallucination assertions deserve special attention. LLMs have a known failure mode where they confidently import packages that don't exist — agent_tester, simulation_framework, etc. We explicitly assert that generated code does NOT reference hallucinated packages. This is a testing pattern specific to agentic systems that traditional testing would never need.

Testing degraded operation

Skills don't always run in ideal conditions. Users might not have API keys configured. The MCP server might be unreachable. We test three operational modes:

Mode | What's Different | What It Validates |

|---|---|---|

Normal | Full env + MCP | Happy path |

Clean env | API keys stripped | Cold-start discovery — does the skill ask for keys? |

No MCP | MCP server unavailable | Fallback to llms.txt — does it degrade gracefully? |

A skill that only works under perfect conditions will fail in the field. Testing degraded modes gives us real confidence before shipping.

Coverage: 36 Scenarios across 8 Skill families

Every LangWatch skill is tested against realistic fixture codebases — not toy examples, but actual project structures with package.json, main.py, dependencies, and configuration files.

Why Simulation closes the E2E Gap

Traditional testing assumes deterministic systems. You call a function, you get a result, you assert on it. Agent skills break this model in three ways:

Non-deterministic execution — The same skill + prompt produces different outputs every run. You can't assert on exact strings.

Multi-turn dependencies — Skill quality depends on how the agent handles the full conversation, not just the first response. A skill might nail the initial implementation but fail when the user asks a follow-up question.

Emergent failure modes — Hallucinated imports, generic outputs, failure to ask for missing configuration. These aren't bugs in the traditional sense — they're behavioral failures that only surface in realistic usage.

Scenario simulations address all three:

Non-determinism → Hybrid evaluation (deterministic for facts, semantic for quality)

Multi-turn → User simulator drives realistic conversation flow

Emergent failures → Anti-hallucination assertions + domain specificity checks + degraded operation testing

Honest trade-offs

This approach isn't free of downsides. Each scenario spawns a real Claude Code session, which means real API costs and non-trivial runtime. There's no diff-based test selection yet — it's all-or-nothing. And because outputs are non-deterministic, a flaky test doesn't always mean a flaky skill; sometimes the judge model just disagrees with itself. These are problems we're actively working on.

But the alternative — shipping skills with only static checks or single-prompt tests — leaves an entire class of failures undetected until users hit them. For us, the trade-off is worth it.

The dogfooding loop

We test LangWatch skills using the LangWatch Scenario SDK. This creates a practical feedback loop: if the SDK can't express a test we need, we improve the SDK. If a skill fails in a way the SDK should catch, we add that capability. The product gets better because we use it on ourselves first.

The @langwatch/scenario SDK is published on npm and PyPI. The same three-agent triangle we use internally is available to anyone building agent skills. You bring your agent adapter, define your scenarios, and the SDK handles user simulation, judge evaluation, and result aggregation.

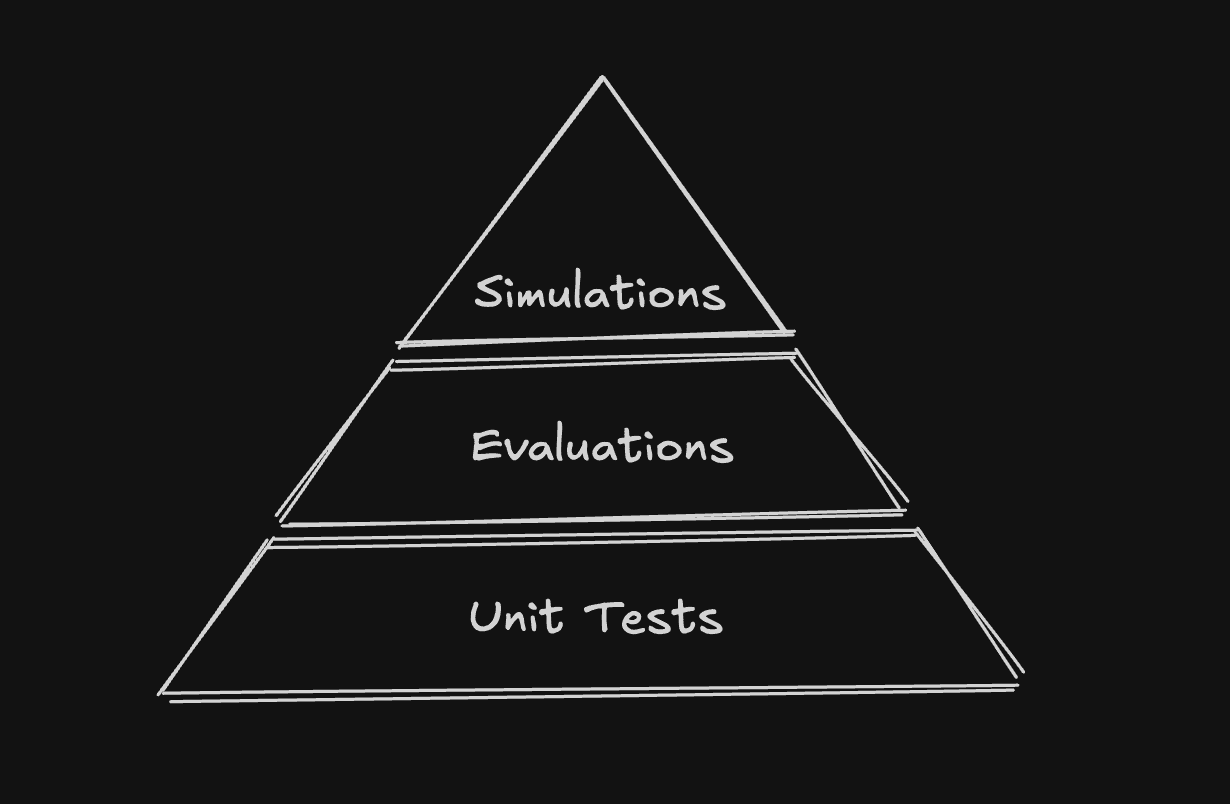

The testing pyramid for Agent Skills

Each level catches a different class of issues. Static validation catches structural problems. CLI adapter tests catch functional failures. Scenario simulations catch behavioral failures — the kind that only emerge in realistic, multi-turn usage.

Most teams stop at level 1 or 2. Moving to level 3 is where we've seen the biggest reduction in skills breaking in production.

LangWatch Scenario SDK is open source and available on npm and PyPI. If you're building agent skills and want to test them with full conversation simulations, the repo is here.