The Agent Development Lifecycle: Why shipping is the easy part

Manouk Draisma

Most AI agents look brilliant in demos. Then real users arrive, and things get complicated. Here's the systematic framework your team needs to keep agents reliable, safe, and genuinely improving in production.

95%of GenAI deployments fail to deliver measurable business impact | 40%of agentic AI projects predicted to be cancelled before delivering value | ~50%of organizations don't monitor their AI systems for drift or misuse | 5core lifecycle stages every production agent team must master |

|---|

Agents don't break at launch. They break after it.

There's a pattern we see constantly in AI teams: the demo goes well. The pilot succeeds. The stakeholders are excited. Then real users arrive, the data shifts, a model gets updated, and the agent that was performing beautifully last Tuesday starts giving subtly wrong answers on Monday. Nobody notices for two weeks.

This isn't a model problem. It isn't a prompt problem. It's a lifecycle problem. The team built something great, shipped it — and then had no systematic way to know whether it kept being great.

"The agent itself isn't breaking. It's the lifecycle around it."

Traditional software development has a convenient property: once you ship a feature, it stays the same. Tests pass, deployment succeeds, behavior is deterministic. AI agents break this assumption completely. An agent's outputs are shaped by prompts, model versions, tool outputs, retrieved context, and user input — all of which change constantly, often without any code release. A prompt tweak, a model update, a new class of user query: any of these can silently shift behavior in ways that are hard to detect and even harder to trace.

This is why the industry's failure rates are so striking. The problem isn't that teams can't build agents — it's that they haven't yet built the operational infrastructure to run them as long-lived products. Shipping is the easy part. Operating is where the real work begins.

The core insight

AI agents are living systems. They don't just need to be built well — they need to be operated with the same rigor and continuous attention you'd give a production microservice, a human team, or any other system where the world around it keeps changing.

Introducing the Agent Development Lifecycle

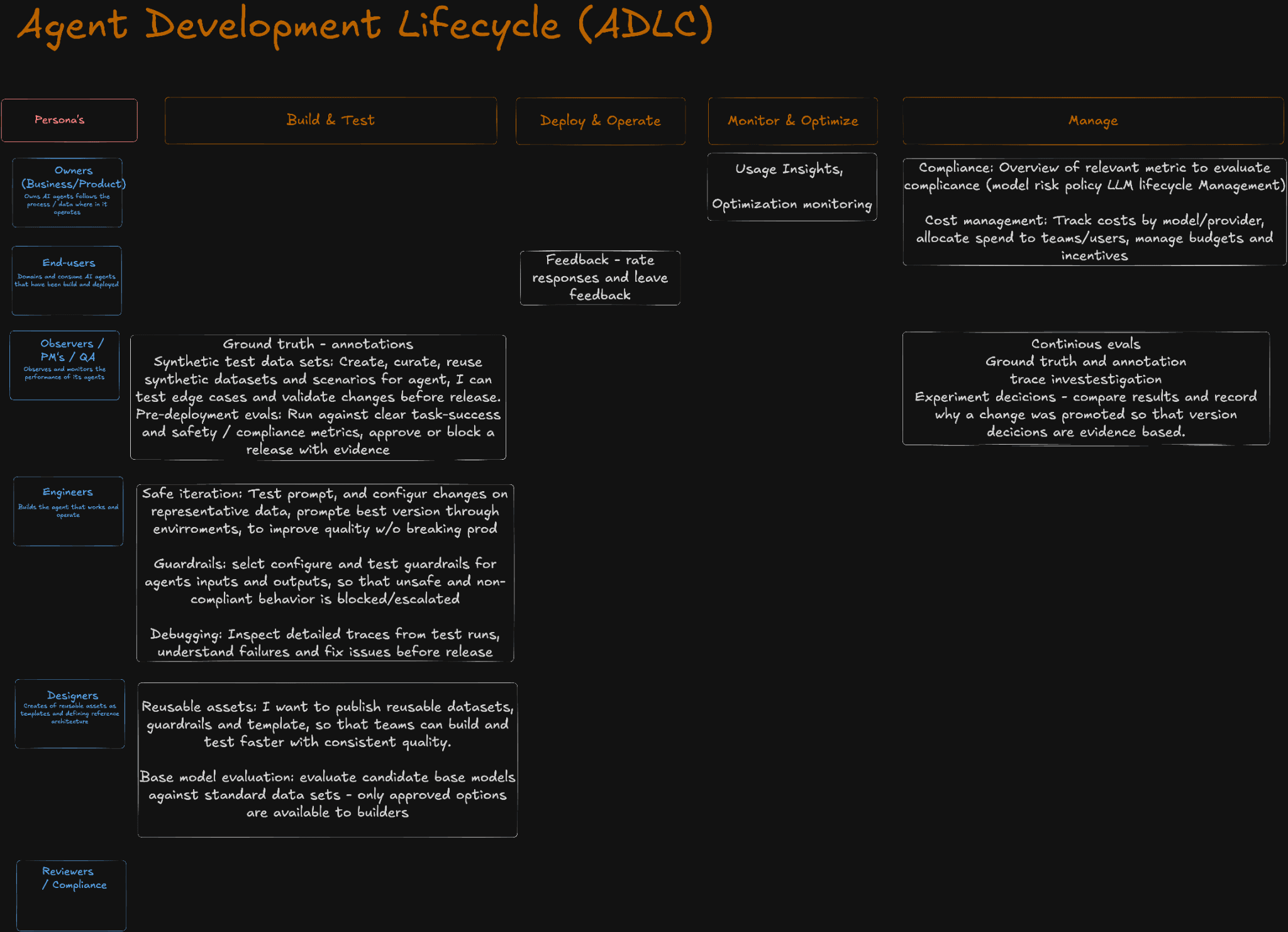

The Agent Development Lifecycle (ADLC) is a framework for thinking about AI agents not as features to ship but as products to operate. It maps the full journey from the moment an agent is conceived through continuous improvement in production — and critically, it names the people responsible at each stage.

The diagram above captures something important that most engineering teams overlook: the lifecycle isn't a waterfall. It's a loop. Every stage feeds into the next, and the Manage phase feeds back into Build & Test. The question isn't "have we launched?" — it's "are we getting better?"

The framework organizes work across five phases: Personas → Build & Test → Deploy & Operate → Monitor & Optimize → Manage. Each phase has specific outputs, specific owners, and specific failure modes when neglected. Let's break them down.

The six personas and why they all matter

One of the most under appreciated aspects of the ADLC is how explicitly it involves non-engineers. AI agents affect business outcomes, compliance obligations, and end-user trust — which means the people responsible for those things need to be part of the lifecycle, not just consulted at launch.

Owners Business and product leaders who define what the agent is for, what success looks like, and which data it operates on. They care about business outcomes, not tokens. | End-Users The people who actually interact with deployed agents. Their feedback — through ratings, free-text notes, and usage patterns — is the most honest signal of quality you'll get. | Observers / PM / QA The people who watch performance, create ground truth annotations, write test scenarios, and decide whether a release is ready. They are the conscience of the lifecycle. |

Engineers The builders. They configure prompts, set up guardrails, test across environments, debug traces, and own the mechanics of safe iteration without breaking production. | Designers Architects of reusable assets: standard datasets, guardrail templates, and reference architectures. They ensure teams build fast without sacrificing consistency or quality. | Reviewers / Compliance The gatekeepers of responsible deployment. They ensure agents meet regulatory requirements, model risk policies, and safety standards before and after launch. |

Getting these personas aligned — with shared visibility into the same data — is often the difference between an agent that keeps improving and one that quietly degrades until someone complains loudly enough.

The four phases, in depth

Phase 1: Build & Test

This is where engineering and QA teams define what the agent should do and validate it before anyone real touches it. But "testing" in the agent world is fundamentally different from traditional QA. You can't write a unit test that captures whether a response is helpful. You need scenarios.

The Build & Test phase for a well-run team includes: ground truth annotations (manually labelled examples of good and bad behavior), synthetic test datasets that cover edge cases the agent hasn't seen, and pre-deployment evaluations that run the agent against task-success and safety metrics before release. Critically, this phase doesn't end with launch — it feeds continuously into the Manage phase as new production failures surface.

01 Ground Truth | Annotations and canonical examples Human-labelled examples of correct, incorrect, and edge-case outputs. The gold standard that all evaluation flows from. |

02 Synthetic Data | Curated test scenarios A controlled set of scenarios that cover the cases you care about. Not thousands of auto-generated prompts, but a few dozen well-thought-out ones that you actually read. |

03 Pre-Deploy Evals | Evidence-based release gates Run against clear task-success and safety metrics. Every release is either approved or blocked with evidence, not with intuition. |

04 Safe Iteration | Prompt and config testing across environments Test changes on representative data across dev, staging, and production, promoting only what improves quality without introducing regressions. |

05 Guardrails | Input and output safety controls Configurable policies that block or escalate unsafe, non-compliant, or out-of-scope agent behavior before it reaches users. |

06 Debugging | Detailed trace inspection When something goes wrong, you need to see exactly what happened: which tools fired, what was retrieved, what the model reasoned about, and where the chain broke. |

Phase 2: Deploy & Operate

The deployment question isn't just "how do we get it live?" It's "how do we get it live in a way we can roll back, audit, and control?" Agents need versioning not just for code, but for prompts, tools, model selections, and guardrail configurations. A prompt change is a release.

End-users are also an active part of this phase. The ability to rate responses and leave feedback isn't a nice-to-have — it's a primary signal channel. User feedback is often the fastest way to surface failures that automated evals miss, because it captures the felt quality of an interaction, not just its technical correctness.

Phase 3: Monitor & Optimize

Monitoring for agents means something richer than uptime and latency. It means tracking usage patterns, understanding which scenarios users hit most, identifying where the agent escalates or fails, and building the kind of insight that lets teams make informed decisions about what to improve next.

This is also where optimization happens — not based on gut feeling, but on data. Which prompt variation performed better across real user queries? Which tool call pattern correlates with user dissatisfaction? Which topics cause the most hallucinations? You can't answer these questions without systematic observability.

Phase 4: Manage

The Manage phase is what separates teams running agents as products from teams running agents as experiments. It includes continuous evals against production data (not just the test set you built last quarter), ground truth annotation pipelines that keep improving as the agent encounters new situations, and experiment tracking that records why each version was promoted — so decisions are evidence-based, not anecdotal.

Compliance also lives here. Managing an agent means maintaining an overview of which metrics indicate model risk, tracking costs by model and provider, and ensuring that the agent remains within the policy constraints your organization has set. As regulatory scrutiny of AI systems increases, this isn't optional — it's existential.

Four principles that separate good teams from great ones

The ADLC gives you structure. These principles give you judgment — the kind that determines whether the structure actually produces better agents or just more process.

Systematic quality over vibe-checking

Most teams still evaluate agents by sending messages and eyeballing the results. This is necessary but not sufficient. Vibe-checking captures human intuition — but intuition doesn't scale, doesn't persist across releases, and can't tell you which of two prompt variants is 12% better on task completion. Build the systematic layer: simulations, evaluations, defined iteration processes. Then use vibe-checking to feed it with new scenarios, not to replace it.

Few well-thought scenarios over thousands of auto-generated ones

It's tempting to auto-generate thousands of test cases and let an AI evaluate them. The problem: you'll never read them. A set of ten carefully designed scenarios, reviewed by engineers, domain experts, and product managers, creates genuine trust in the agent's behavior. Auto-generated coverage is valuable for finding new edge cases — but it shouldn't replace the humanly-readable core test suite that your whole team can reason about.

Business metrics over technical ones

Latency matters. Token cost matters. But the reason you built the agent is to deliver business value — and that's what your evaluation should ultimately be anchored to. Proxy metrics like escalation rate, user acceptance of suggestions, or task completion without human intervention get you much closer to the real signal than BLEU scores ever will. Teams that focus on business metrics, even when they're hard to define, consistently build better agents than those that optimize purely for technical proxies.

Incremental improvement over premature complexity

The agents with the highest production success rates almost always started simple. One model, one tool, one job done well — then expanded from there. Teams that begin with an orchestrator agent, fifteen subagents, and forty-two tools are building something they can't control before they've learned what needs controlling. Start with a single, well-defined capability. Launch it. Measure it. Prove it works. Then add the next layer. Complexity that isn't grounded in production evidence is a liability, not an asset.

Where LangWatch fits in the ADLC

LangWatch is built around the conviction that better agents come from systematic, evidence-based iteration — not from having the best prompts on day one. The platform is designed to support every phase of the lifecycle described above, giving the different personas in your organization the visibility and control they need to act.

For engineers, that means trace-level debugging and safe prompt iteration across environments. For QA and PMs, it means continuous evaluation pipelines anchored to ground truth annotations and business metrics. For compliance and owners, it means cost tracking by model and provider, and the audit trail needed to demonstrate responsible deployment.

The goal isn't to add more tooling on top of an already fragmented stack. It's to connect observability, evaluation, and iteration into a single loop — so that every change your team makes is grounded in evidence, every regression is caught before it reaches users, and every version promoted is one you can actually explain.

"Ship agents with confidence, not crossed fingers."

The ADLC isn't a framework you implement once. It's a practice you build. The teams that get there — who have systematic evals, meaningful observability, and clear feedback loops from users to engineers — consistently outperform those that treat agent quality as something you assess at launch and then assume stays constant.

The hardest part of running AI agents in production isn't building them. It's keeping them good. That's the work the Agent Development Lifecycle exists to make systematic, repeatable, and eventually something your whole organization can trust.

Ready to close the loop?

LangWatch helps teams at every stage of the Agent Development Lifecycle, from pre-deployment evals to continuous production monitoring. Book a demo and learn more.