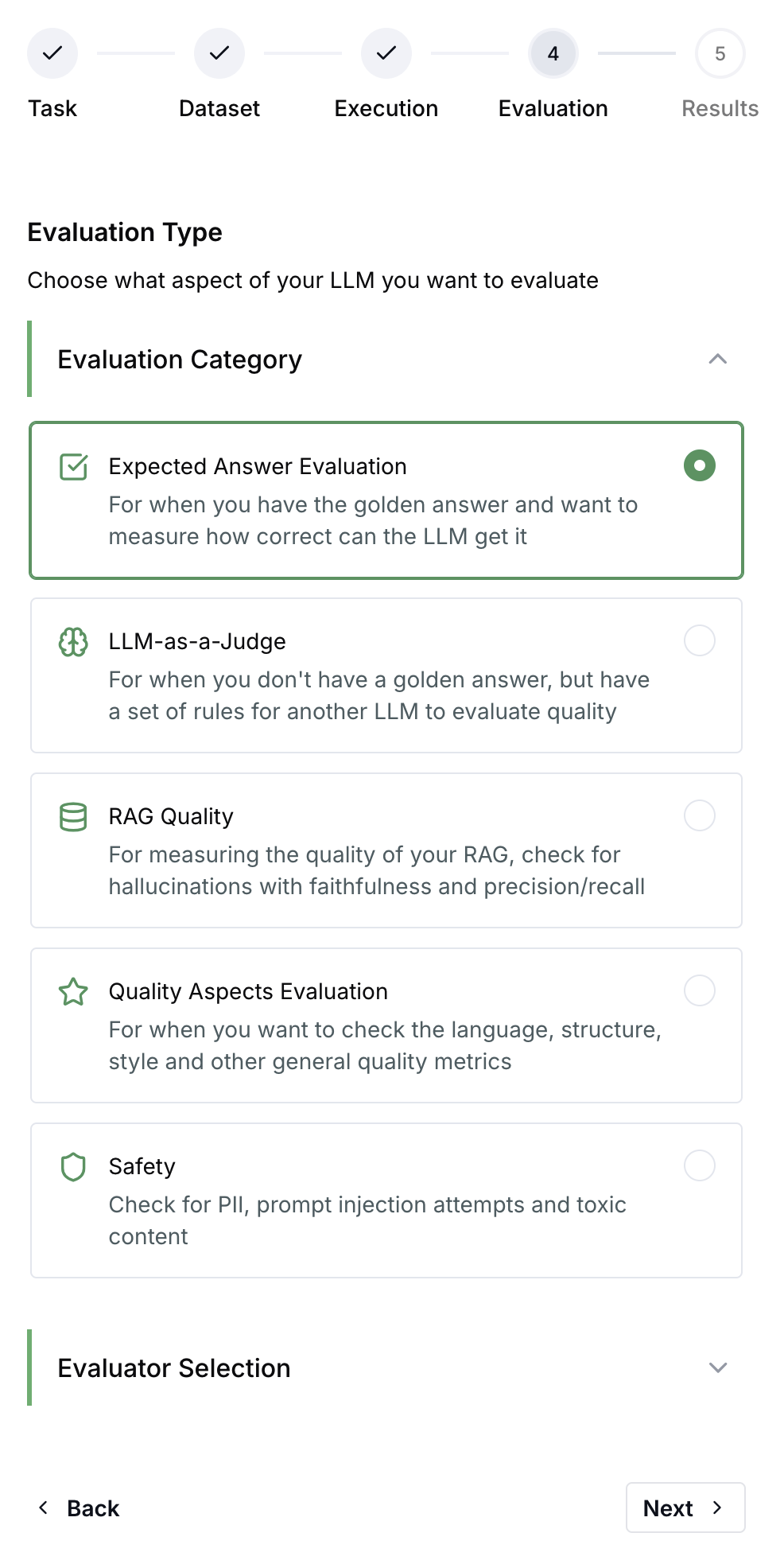

Experiments let you systematically test your LLM applications before deploying to production. Run your prompts, models, or agents against datasets and measure quality with evaluators.

What is an Experiment?

An experiment consists of three components:

- Dataset - A collection of test cases with inputs (and optionally expected outputs)
- Target - What you’re testing: a prompt, model, API endpoint, or custom code
- Evaluators - Scoring functions that assess output quality

When you run an experiment, LangWatch executes your target on each dataset row and scores the results with your selected evaluators.
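The loop described above can be sketched in plain Python, without the LangWatch SDK. Everything in this block (`run_experiment`, `exact_match`, the field name `expected_output`, the toy dataset, and the canned target) is a hypothetical illustration of the dataset/target/evaluator split, not LangWatch's API.

```python
from typing import Callable

Row = dict[str, str]


def exact_match(output: str, expected: str) -> float:
    """Toy evaluator: 1.0 if the output equals the expected answer, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_experiment(
    dataset: list[Row],
    target: Callable[[str], str],
    evaluator: Callable[[str, str], float],
) -> list[dict]:
    """Run the target on every dataset row and score each output."""
    results = []
    for index, row in enumerate(dataset):
        output = target(row["input"])
        score = evaluator(output, row["expected_output"])
        results.append({"index": index, "output": output, "score": score})
    return results


# Hypothetical dataset and target, standing in for real test cases and an LLM call.
dataset = [
    {"input": "2 + 2", "expected_output": "4"},
    {"input": "capital of France", "expected_output": "Paris"},
]
canned = {"2 + 2": "4", "capital of France": "Lyon"}
results = run_experiment(dataset, lambda question: canned[question], exact_match)
```

Here the second row scores 0.0 because the canned target answers "Lyon", which is the kind of per-row signal an evaluator is meant to surface.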

When to Use Experiments

- Before deploying - Validate prompt changes don’t regress quality
- Comparing options - Test different models, prompts, or configurations side-by-side
- CI/CD gates - Automatically block deployments that fail quality thresholds
- Benchmarking - Track quality metrics over time across experiment runs
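As a rough illustration of a regression check between two options, the sketch below compares per-row scores from a baseline run and a candidate run over the same dataset rows. The function `compare_runs` and the scores are hypothetical; they are not part of the LangWatch SDK.

```python
from statistics import mean


def compare_runs(baseline: list[float], candidate: list[float]) -> dict:
    """Compare per-row scores from two runs over the same dataset rows."""
    if len(baseline) != len(candidate):
        raise ValueError("both runs must cover the same dataset rows")
    # A row regresses when the candidate scores strictly lower than the baseline.
    regressed = [i for i, (b, c) in enumerate(zip(baseline, candidate)) if c < b]
    return {
        "baseline_mean": mean(baseline),
        "candidate_mean": mean(candidate),
        "regressed_rows": regressed,
    }


# Hypothetical scores for two prompt versions on four rows.
report = compare_runs([0.5, 1.0, 0.75, 1.0], [1.0, 1.0, 0.5, 1.0])
```

Looking at regressed rows alongside the means matters because an improved average can still hide individual test cases that got worse.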

Getting Started

Choose your preferred approach:

Quick Example

```python
import langwatch

# Load your dataset
df = langwatch.dataset.get_dataset("my-dataset").to_pandas()

# Initialize experiment
evaluation = langwatch.experiment.init("prompt-v2-test")

# Run through dataset
for idx, row in evaluation.loop(df.iterrows()):
    # Execute your LLM
    response = my_llm(row["input"])

    # Run evaluators
    evaluation.evaluate(
        "ragas/faithfulness",
        index=idx,
        data={
            "input": row["input"],
            "output": response,
            "contexts": row["contexts"],
        },
    )
```

```typescript
import { LangWatch } from "langwatch";

const langwatch = new LangWatch();

// Load dataset
const dataset = await langwatch.datasets.get("my-dataset");

// Initialize experiment
const evaluation = await langwatch.experiments.init("prompt-v2-test");

// Run through dataset
await evaluation.run(
  dataset.entries.map((e) => e.entry),
  async ({ item, index }) => {
    // Execute your LLM
    const response = await myLLM(item.input);

    // Run evaluators
    await evaluation.evaluate("ragas/faithfulness", {
      index,
      data: {
        input: item.input,
        output: response,
        contexts: item.contexts,
      },
    });
  },
  { concurrency: 4 }
);
```

Experiment Results

After running an experiment, you can:

- Compare runs - See how different configurations perform side-by-side
- Drill into failures - Inspect individual test cases that scored poorly
- Track trends - Monitor quality metrics across experiment runs over time
- Export data - Download results for further analysis
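Once results are exported, drilling into failures can be as simple as filtering and sorting rows by score. The sketch below assumes result rows shaped as dictionaries with `index`, `input`, and `score` keys; that shape, and the helpers `failing_rows` and `to_csv`, are assumptions for illustration rather than LangWatch's export format.

```python
import csv
import io


def failing_rows(results: list[dict], threshold: float) -> list[dict]:
    """Return rows whose score is below the threshold, worst first."""
    return sorted(
        (row for row in results if row["score"] < threshold),
        key=lambda row: row["score"],
    )


def to_csv(rows: list[dict]) -> str:
    """Serialize result rows to CSV text for analysis elsewhere."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["index", "input", "score"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical result rows from a single experiment run.
results = [
    {"index": 0, "input": "refund policy", "score": 0.9},
    {"index": 1, "input": "shipping time", "score": 0.4},
    {"index": 2, "input": "warranty", "score": 0.2},
]
failures = failing_rows(results, threshold=0.5)
csv_text = to_csv(failures)
```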

CI/CD Integration

Run experiments automatically in your deployment pipeline:

```yaml
# GitHub Actions example
- name: Run quality experiments
  run: |
    python -c "
    import langwatch
    result = langwatch.experiment.run('my-experiment')
    result.print_summary()
    "
  env:
    LANGWATCH_API_KEY: ${{ secrets.LANGWATCH_API_KEY }}
```

Learn more about CI/CD integration.
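A CI step fails when its command exits with a non-zero status, so a quality gate can be reduced to turning scores into an exit code. The sketch below shows one way to do that; `gate_exit_code`, the sample scores, and the 0.7 threshold are hypothetical, and how scores are obtained from an experiment run is left out because it depends on the SDK.

```python
from statistics import mean


def gate_exit_code(scores: list[float], threshold: float) -> int:
    """Return 0 if the mean score meets the threshold, 1 otherwise."""
    if not scores:
        return 1  # treat an empty run as a failure rather than a silent pass
    return 0 if mean(scores) >= threshold else 1


# Hypothetical scores; in a real pipeline these would come from the experiment run.
exit_code = gate_exit_code([0.75, 1.0, 0.5], threshold=0.7)
# A CI script would finish with: sys.exit(exit_code)
```

Treating an empty score list as a failure is a deliberate choice in this sketch: a run that evaluated nothing should not let a deployment through.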

Next Steps

Answer Correctness Tutorial